Positive body image is a frequent topic in the media, both online and offline. Body positivity is the belief that all people should have a positive body image, and it challenges the ways in which society presents and views the physical body. Body positivity does not come naturally to many people. We are constantly bombarded with images of what is and isn’t acceptable in relation to body image, and the media tells us what is socially desirable. For the majority of people, that ideal is not their reality.

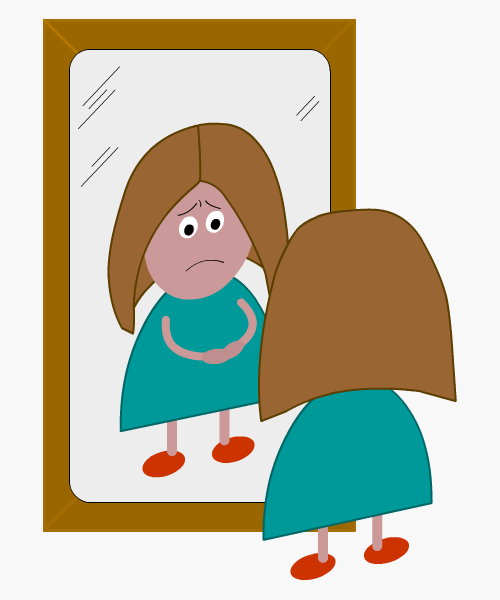

Body image is how you see yourself, both visually and mentally. This includes how you believe you appear when looking in the mirror as well as your mental view of yourself. A positive body image is closely linked to self-esteem and confidence. Individuals with low self-esteem often have a negative body image. Others suffer from body dysmorphia, where their perception of their body does not match reality. The unrealistic expectations of beauty regularly displayed on social media only add to the number of people with insecurities and negative views of their own bodies.

A positive mindset helps to improve self-esteem and counter body image issues. Making positive changes to diet and nutrition, along with a realistic fitness routine, can dramatically improve how people view themselves. People who have a positive body image are not vain or big-headed, and they do not necessarily love everything about the way they look. The important thing is to accept who you are and embrace your individuality and body shape.

The true meaning of a positive body image is acceptance. You can always make positive changes to improve your health and fitness, but self-esteem and inner confidence are key. Happy, genuine people tend to come across as attractive and likeable.